Backpropagation explained | Part 4 - Calculating the gradient

Hey, what's going on everyone? In this episode, we're finally going to see how backpropagation calculates the gradient of the loss function with respect to the weights in a neural network.

So let's get to it.

Our Task

We're now on episode number four in our journey through understanding backpropagation. In the last episode, we focused on how we can mathematically express certain facts about the training process.

Now we're going to use these expressions to help us differentiate the loss of the neural network with respect to its weights.

Recall from the episode on the intuition behind backpropagation that, for stochastic gradient descent to update the weights of the network, it first needs the gradient of the loss with respect to these weights.

Calculating this gradient is exactly what we'll be focusing on in this episode.

We'll start by looking at the equation that backprop uses to differentiate the loss with respect to the weights in the network.

Then, we'll see that this equation is made up of multiple terms, which will allow us to break it down and focus on each term individually.

Lastly, we'll combine the results from each term to obtain the final result: the derivative of the loss with respect to a single weight, which is one component of the gradient of the loss function.

Alright, let's begin.

Derivative of the Loss Function with Respect to the Weights

Let's look at a single weight that connects node \(2\) in layer \(L-1\) to node \(1\) in layer \(L\).

This weight is denoted as

\[ w_{12}^{(L)} \]

The derivative of the loss \( C_{0} \) with respect to this particular weight \( w_{12}^{(L)} \) is denoted as

\[ \frac{\partial C_{0}}{\partial w_{12}^{(L)}} \]

Since \( C_{0} \) depends on \( a_{1}^{(L)} \), \( a_{1}^{(L)} \) depends on \( z_{1}^{(L)} \), and \( z_{1}^{(L)} \) depends on \( w_{12}^{(L)} \), the chain rule tells us that to differentiate \( C_{0} \) with respect to \( w_{12}^{(L)} \), we take the product of the derivatives of these composed functions.

This is expressed as

\[ \frac{\partial C_{0}}{\partial w_{12}^{(L)}} = \left( \frac{\partial C_{0}}{\partial a_{1}^{(L)}} \right) \left( \frac{\partial a_{1}^{(L)}}{\partial z_{1}^{(L)}} \right) \left( \frac{\partial z_{1}^{(L)}}{\partial w_{12}^{(L)}} \right) \]

Let's break down each term on the right-hand side of the above equation.

The first term: \( \frac{\partial C_{0}}{\partial a_{1}^{(L)}} \)

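We know that the loss for a single sample is the sum of squared errors over the nodes \( j \) of the output layer:

\[ C_{0} = \sum_{j} \left( a_{j}^{(L)} - y_{j} \right)^{2} \]

Therefore,

\[ \frac{\partial C_{0}}{\partial a_{1}^{(L)}} = \frac{\partial}{\partial a_{1}^{(L)}} \left( \sum_{j} \left( a_{j}^{(L)} - y_{j} \right)^{2} \right) \]

Expanding the sum, we see

\[ \frac{\partial C_{0}}{\partial a_{1}^{(L)}} = \frac{\partial}{\partial a_{1}^{(L)}} \left( \left( a_{0}^{(L)} - y_{0} \right)^{2} + \left( a_{1}^{(L)} - y_{1} \right)^{2} + \left( a_{2}^{(L)} - y_{2} \right)^{2} + \cdots \right) = 2 \left( a_{1}^{(L)} - y_{1} \right) \]

since only the \( j = 1 \) term of the sum depends on \( a_{1}^{(L)} \); every other term is a constant with respect to it.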

Observe that the loss for a single input sample responds to a small change in the activation output of node \( 1 \) in layer \( L \) at a rate equal to twice the difference between that activation output, \( a_{1}^{(L)} \), and the desired output for node \( 1 \), \( y_{1} \).
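As a quick numerical sanity check on this result, here's a minimal Python sketch. The output activations and desired outputs are hypothetical values chosen only for illustration; the sketch compares \( 2(a_{1}^{(L)} - y_{1}) \) against a central finite-difference estimate obtained by nudging only \( a_{1}^{(L)} \).

```python
# Hypothetical output-layer activations a_j^(L) and desired outputs y_j
# for a single training sample (values chosen only for illustration).
a_out = [0.2, 0.7, 0.1]
y = [0.0, 1.0, 0.0]


def loss(a_out, y):
    # C_0 = sum over output nodes j of (a_j^(L) - y_j)^2
    return sum((a_j - y_j) ** 2 for a_j, y_j in zip(a_out, y))


def dloss_da1(a_out, y):
    # Result from the text: dC_0/da_1^(L) = 2 (a_1^(L) - y_1)
    return 2 * (a_out[1] - y[1])


# Central finite difference: nudge only a_1^(L) up and down by h
h = 1e-6
a_plus = list(a_out)
a_plus[1] += h
a_minus = list(a_out)
a_minus[1] -= h
numeric = (loss(a_plus, y) - loss(a_minus, y)) / (2 * h)

print(dloss_da1(a_out, y))  # 2 * (0.7 - 1.0), roughly -0.6
print(numeric)              # should agree closely with the line above
```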

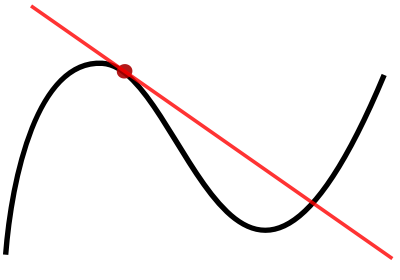

The second term: \( \frac{\partial a_{1}^{(L)}}{\partial z_{1}^{(L)}} \)

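We know that for each node \( j \) in the output layer \( L \), we have

\[ a_{j}^{(L)} = g^{(L)} \left( z_{j}^{(L)} \right) \]

where \( g^{(L)} \) is the activation function of layer \( L \). For \( j = 1 \), this gives

\[ a_{1}^{(L)} = g^{(L)} \left( z_{1}^{(L)} \right) \]

Therefore,

\[ \frac{\partial a_{1}^{(L)}}{\partial z_{1}^{(L)}} = \frac{\partial}{\partial z_{1}^{(L)}} \left( g^{(L)} \left( z_{1}^{(L)} \right) \right) = g^{\prime (L)} \left( z_{1}^{(L)} \right) \]

This is simply the derivative of the activation function evaluated at \( z_{1}^{(L)} \), since \( a_{1}^{(L)} \) is a direct function of \( z_{1}^{(L)} \).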
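The text leaves the activation function of layer \( L \) unspecified. Assuming, for illustration only, that it is the logistic sigmoid \( \sigma(z) = 1/(1 + e^{-z}) \), whose derivative is \( \sigma(z)(1 - \sigma(z)) \), the sketch below checks that derivative against a finite-difference estimate at a hypothetical value of \( z_{1}^{(L)} \).

```python
import math


def g(z):
    # Assumed activation for layer L: the logistic sigmoid
    return 1.0 / (1.0 + math.exp(-z))


def g_prime(z):
    # Sigmoid derivative: g'(z) = g(z) * (1 - g(z))
    s = g(z)
    return s * (1.0 - s)


z1 = 0.4  # hypothetical value of z_1^(L)
h = 1e-6
numeric = (g(z1 + h) - g(z1 - h)) / (2 * h)

print(g_prime(z1))  # da_1^(L)/dz_1^(L) evaluated at z1
print(numeric)      # finite-difference estimate, should match closely
```

With a different activation, only `g` and `g_prime` would change; the role of this term in the chain rule stays the same.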

The third term: \( \frac{\partial z_{1}^{(L)}}{\partial w_{12}^{(L)}} \)

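We know that, for each node \( j \) in the output layer \( L \), we have

\[ z_{j}^{(L)} = \sum_{k} w_{jk}^{(L)} a_{k}^{(L-1)} \]

where the sum runs over the nodes \( k \) in layer \( L-1 \). For \( j = 1 \), this gives

\[ z_{1}^{(L)} = \sum_{k} w_{1k}^{(L)} a_{k}^{(L-1)} \]

Therefore,

\[ \frac{\partial z_{1}^{(L)}}{\partial w_{12}^{(L)}} = \frac{\partial}{\partial w_{12}^{(L)}} \left( \sum_{k} w_{1k}^{(L)} a_{k}^{(L-1)} \right) \]

Expanding the sum, we see

\[ \frac{\partial z_{1}^{(L)}}{\partial w_{12}^{(L)}} = \frac{\partial}{\partial w_{12}^{(L)}} \left( w_{10}^{(L)} a_{0}^{(L-1)} + w_{11}^{(L)} a_{1}^{(L-1)} + w_{12}^{(L)} a_{2}^{(L-1)} + \cdots \right) = a_{2}^{(L-1)} \]

since only the \( k = 2 \) term of the sum involves \( w_{12}^{(L)} \).

The input for node \( 1 \) in layer \( L \) responds to a change in the weight \( w_{12}^{(L)} \) at a rate equal to the activation output of node \( 2 \) in the previous layer, \( L-1 \).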
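Because \( z_{1}^{(L)} \) is linear in each weight, nudging \( w_{12}^{(L)} \) should change it at a rate of exactly \( a_{2}^{(L-1)} \). A minimal sketch with hypothetical weights and activations confirms this numerically. It omits any bias term, which is an assumption here; a bias would not affect this particular derivative anyway.

```python
# Hypothetical activations a_k^(L-1) of the previous layer
a_prev = [0.3, 0.8, 0.5]
# Hypothetical weights w_1k^(L) into node 1 of layer L
w1 = [0.1, -0.4, 0.7]


def z1(weights, a_prev):
    # z_1^(L) = sum_k w_1k^(L) * a_k^(L-1); no bias term (an assumption)
    return sum(w * a for w, a in zip(weights, a_prev))


# Central finite difference: nudge only w_12^(L) (index 2)
h = 1e-6
w_plus = list(w1)
w_plus[2] += h
w_minus = list(w1)
w_minus[2] -= h
numeric = (z1(w_plus, a_prev) - z1(w_minus, a_prev)) / (2 * h)

print(numeric)    # approximately a_2^(L-1)
print(a_prev[2])  # 0.5
```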

Combining terms

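Combining all terms, we have

\[ \frac{\partial C_{0}}{\partial w_{12}^{(L)}} = 2 \left( a_{1}^{(L)} - y_{1} \right) \left( g^{\prime (L)} \left( z_{1}^{(L)} \right) \right) \left( a_{2}^{(L-1)} \right) \]

where \( g^{\prime (L)} \) is the derivative of the activation function of layer \( L \).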

We Conclude

We've seen how to calculate the derivative of the loss with respect to one individual weight for one individual training sample.
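To check the combined single-weight, single-sample formula end to end, here's a small sketch that compares \( 2(a_{1}^{(L)} - y_{1}) \) times the activation derivative at \( z_{1}^{(L)} \) times \( a_{2}^{(L-1)} \) against a finite-difference estimate of the full loss. It assumes a sigmoid activation for layer \( L \), no bias terms, and hypothetical sizes and values (three nodes in layer \( L-1 \), two in layer \( L \)); none of these come from the text.

```python
import math


def g(z):
    # Assumed activation for layer L: logistic sigmoid
    return 1.0 / (1.0 + math.exp(-z))


def g_prime(z):
    # Derivative of the sigmoid: g(z) * (1 - g(z))
    s = g(z)
    return s * (1.0 - s)


# Hypothetical single-sample setup: 3 nodes in layer L-1, 2 nodes in layer L.
a_prev = [0.3, 0.8, 0.5]      # a_k^(L-1)
W = [[0.2, -0.1, 0.4],        # W[j][k] holds w_jk^(L)
     [0.1, -0.4, 0.7]]
y = [0.0, 1.0]                # desired outputs y_j


def loss(W):
    # C_0 = sum_j (g(z_j^(L)) - y_j)^2, with z_j^(L) = sum_k w_jk^(L) a_k^(L-1)
    total = 0.0
    for j, row in enumerate(W):
        z_j = sum(w * a for w, a in zip(row, a_prev))
        total += (g(z_j) - y[j]) ** 2
    return total


# Combined formula: 2 (a_1^(L) - y_1) * g'(z_1^(L)) * a_2^(L-1)
z_1 = sum(w * a for w, a in zip(W[1], a_prev))
a_1 = g(z_1)
analytic = 2 * (a_1 - y[1]) * g_prime(z_1) * a_prev[2]

# Finite-difference estimate: nudge only w_12^(L) and re-evaluate the full loss
h = 1e-6
W_plus = [list(row) for row in W]
W_plus[1][2] += h
W_minus = [list(row) for row in W]
W_minus[1][2] -= h
numeric = (loss(W_plus) - loss(W_minus)) / (2 * h)

print(analytic)
print(numeric)  # should agree with analytic to several decimal places
```

Note that `loss` includes the node-0 term as well; it simply contributes nothing to this derivative, because \( z_{0}^{(L)} \) does not depend on \( w_{12}^{(L)} \).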

To calculate the derivative of the total loss \( C \) with respect to this same weight, \( w_{12}^{(L)} \), over all \( n \) training samples, we average the per-sample derivatives over the \( n \) samples.

This can be expressed as

\[ \frac{\partial C}{\partial w_{12}^{(L)}} = \frac{1}{n} \sum_{i=0}^{n-1} \frac{\partial C_{i}}{\partial w_{12}^{(L)}} \]

We would then repeat this process for every weight in the network to calculate the derivative of \( C \) with respect to each weight; together, these derivatives make up the gradient.
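The sketch below puts the last two steps together for the output layer only: it applies the single-weight formula to every weight \( w_{jk}^{(L)} \) and averages over a small, hypothetical batch of \( n = 3 \) samples. It again assumes a sigmoid activation and no biases, and all values are made up for illustration. Weights in earlier layers are not covered here; those require the backward movement of backpropagation that the next episode takes up.

```python
import math


def g(z):
    # Assumed activation for layer L: logistic sigmoid
    return 1.0 / (1.0 + math.exp(-z))


def sample_gradient(W, a_prev, y):
    """dC_i/dw_jk^(L) for every weight in layer L, for one sample.

    W[j][k] holds w_jk^(L), a_prev holds a_k^(L-1), y holds y_j.
    """
    grad = []
    for j, row in enumerate(W):
        z_j = sum(w * a for w, a in zip(row, a_prev))
        a_j = g(z_j)
        # Factor shared by every weight into node j: 2 (a_j - y_j) * g'(z_j)
        shared = 2 * (a_j - y[j]) * a_j * (1 - a_j)
        grad.append([shared * a_k for a_k in a_prev])
    return grad


def average_gradient(W, samples):
    # dC/dw_jk^(L) = (1/n) * sum_i dC_i/dw_jk^(L)
    n = len(samples)
    total = [[0.0] * len(row) for row in W]
    for a_prev, y in samples:
        grad_i = sample_gradient(W, a_prev, y)
        for j, row in enumerate(grad_i):
            for k, value in enumerate(row):
                total[j][k] += value
    return [[value / n for value in row] for row in total]


# Hypothetical layer with 3 inputs and 2 outputs, and n = 3 training samples,
# each given as (activations of layer L-1, desired outputs of layer L).
W = [[0.2, -0.1, 0.4],
     [0.1, -0.4, 0.7]]
samples = [([0.3, 0.8, 0.5], [0.0, 1.0]),
           ([0.9, 0.1, 0.2], [1.0, 0.0]),
           ([0.4, 0.4, 0.6], [0.0, 1.0])]

grad = average_gradient(W, samples)
print(grad[1][2])  # averaged derivative for w_12^(L)
```

The factor \( 2(a_{j}^{(L)} - y_{j}) \) times the activation derivative at \( z_{j}^{(L)} \) is computed once per output node and reused for every incoming weight, since it does not depend on \( k \).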

Wrapping Up

At this point, we should understand mathematically how backpropagation calculates the gradient of the loss with respect to the weights in the network.

We should also have a solid grasp of all the intermediate steps needed for this calculation, and we should be able to generalize the result we obtained for a single weight and a single sample to all the weights in the network for all training samples.

We still haven't fully driven the point home, though, because we haven't yet discussed the math underlying the backwards movement of backpropagation that we described when we covered its intuition. Don't worry, we'll do that in the next episode. Thanks for reading. See you next time.

DEEPLIZARD
