Deep Learning Course - Level: Beginner

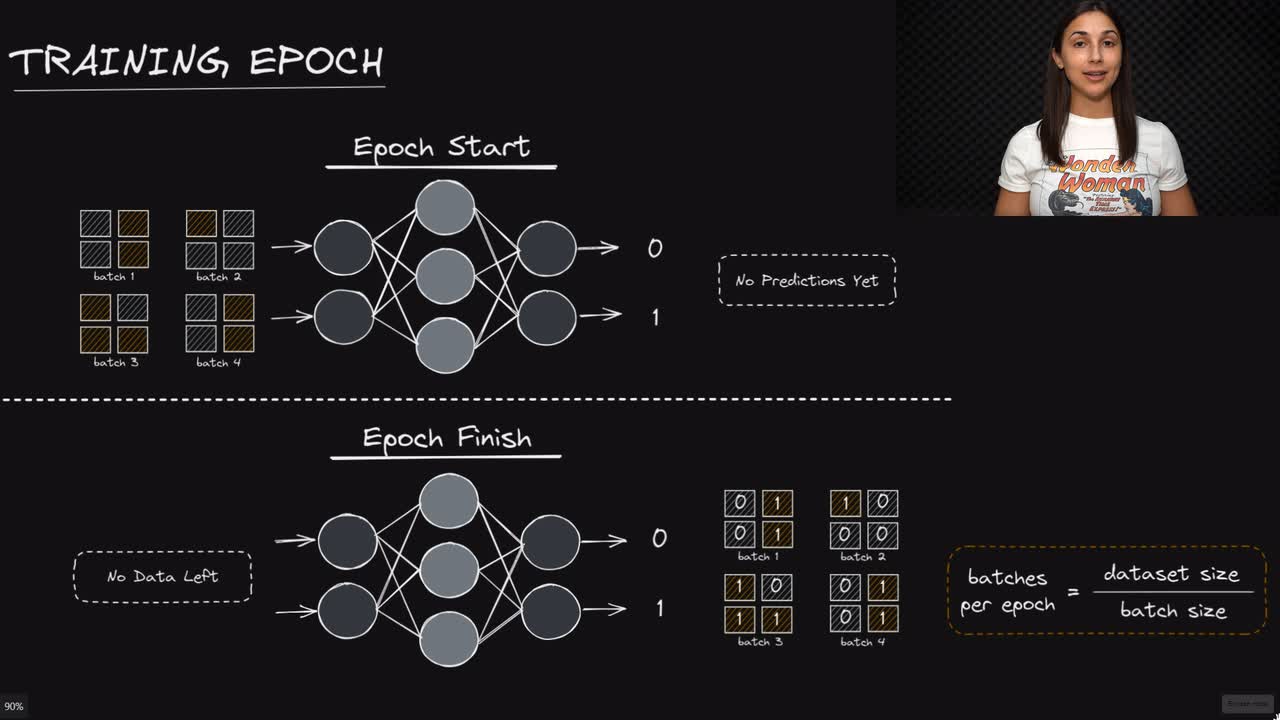

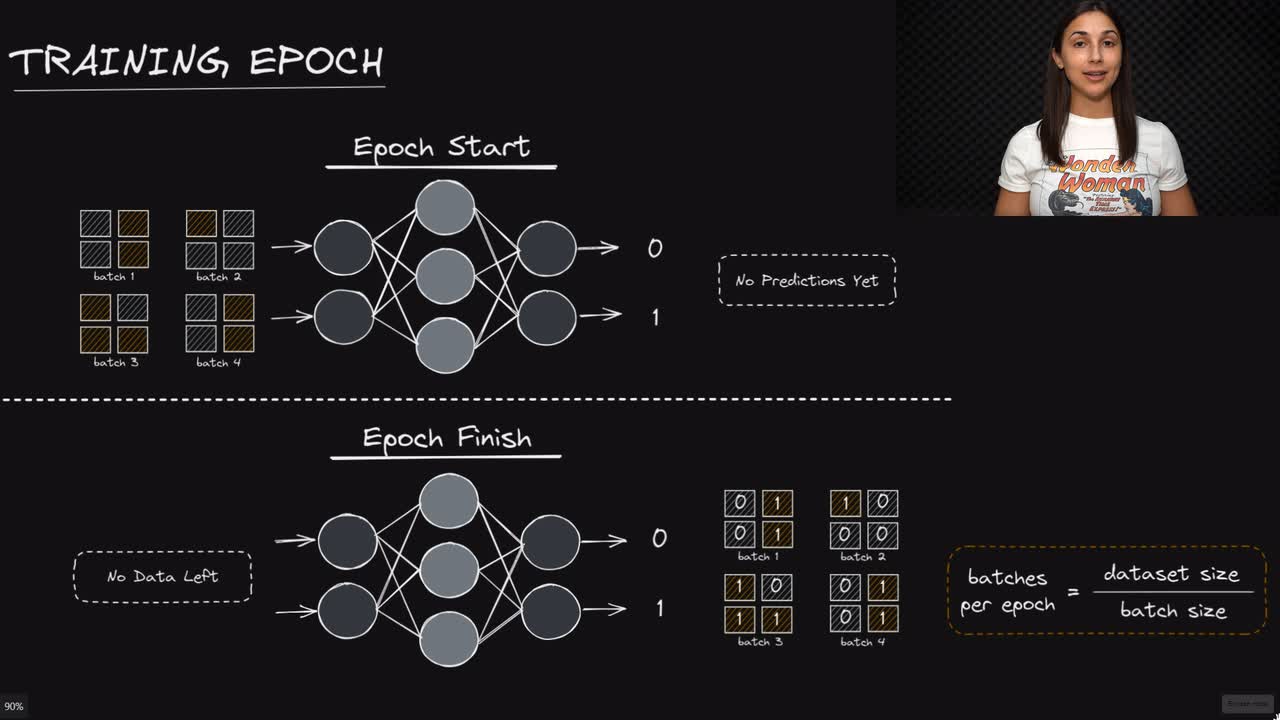

When we train a network, we need a way to specify how long training should run. We don't measure this in terms of time, but rather in terms of how many times we pass the batched data set to the network.

An epoch is a single pass of the entire data set through the network. In other words, once every batch in the data set has been passed to the network exactly once during training, we say that one epoch has completed.
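
To make the definition concrete, here is a minimal sketch in plain Python. It is not tied to any deep learning library: the names `train`, `count_batches`, `batch_size`, and `num_epochs` are hypothetical, the "data set" is just a list of integers, and the actual training step is left as a comment. It only illustrates how the epoch loop wraps the batch loop.

```python
import math


def count_batches(num_samples, batch_size):
    """Number of batches needed to cover the data set once (last batch may be smaller)."""
    return math.ceil(num_samples / batch_size)


def train(dataset, batch_size, num_epochs):
    """Iterate over the data set in batches, num_epochs times.

    Returns a log of (epoch_index, batch) pairs so the loop structure can be inspected.
    """
    log = []
    for epoch in range(num_epochs):
        # One epoch: every batch in the data set is visited exactly once.
        for start in range(0, len(dataset), batch_size):
            batch = dataset[start:start + batch_size]
            # A real training step (forward pass, loss, weight update) would go here.
            log.append((epoch, batch))
    return log


# Illustrative values only: 10 samples, batches of 4, 3 epochs.
dataset = list(range(10))
log = train(dataset, batch_size=4, num_epochs=3)
batches_per_epoch = count_batches(len(dataset), 4)

print(batches_per_epoch)  # 3 batches per epoch (sizes 4, 4, and 2)
print(len(log))           # 9 batch passes in total: 3 batches x 3 epochs
```

Under these assumptions, training for 3 epochs means each of the 10 samples is seen 3 times, spread over 9 batch passes.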
