Deep Learning Course - Level: Beginner

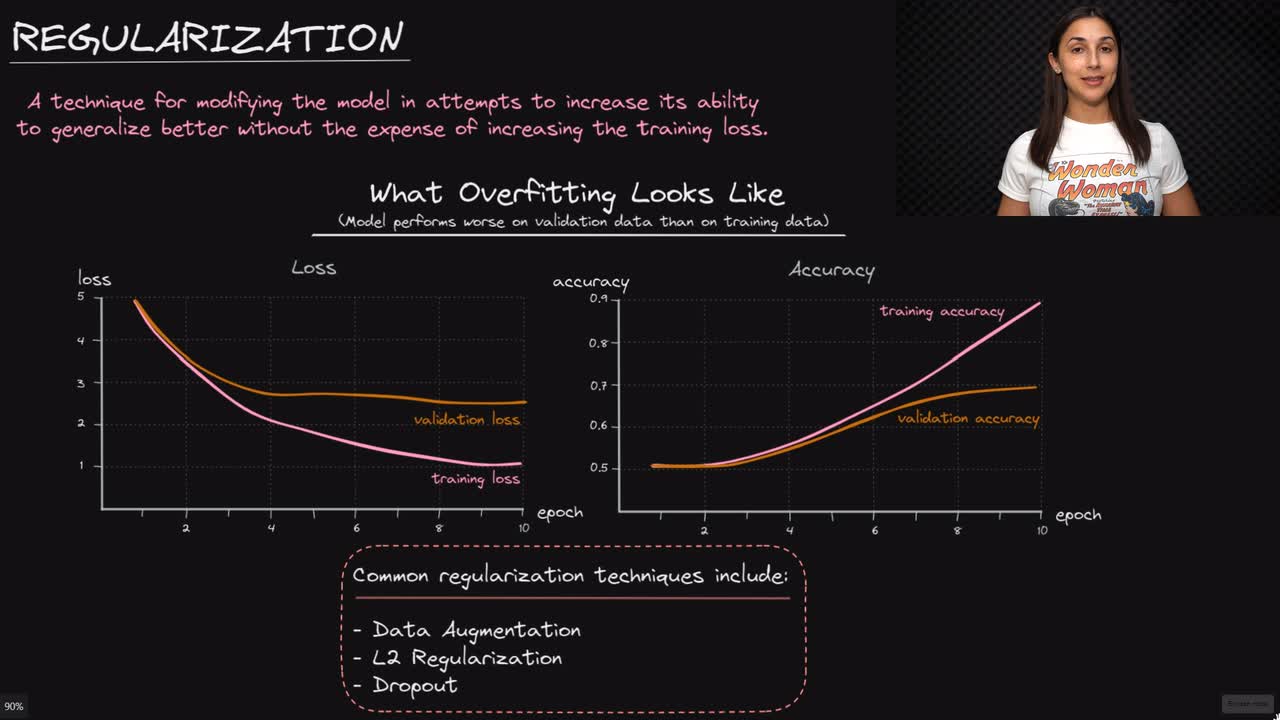

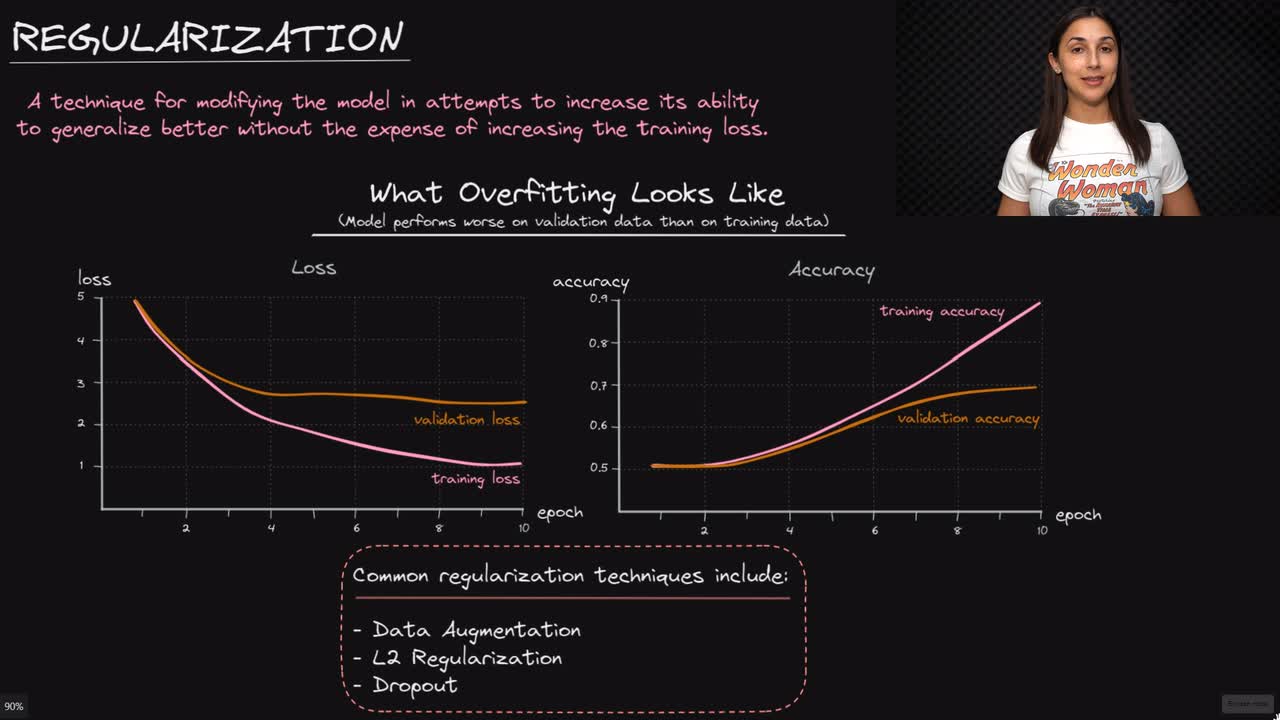

Generally, regularization is any technique that modifies the model, or the learning algorithm more broadly, in an attempt to improve its ability to generalize without paying for that improvement with a higher training loss.

In other words, regularization techniques are used to reduce overfitting without introducing significant underfitting.
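As a rough illustration, here is a minimal sketch of one common regularization technique, an L2 penalty on the weights. The text above does not single out any particular technique, so the choice of L2, the toy data, and the names `fit_weight`, `penalized_loss`, and `lam` are all assumptions made for this example. It fits a single weight `w` in the model `y = w * x` and shows how the penalty pulls the weight toward zero.

```python
def fit_weight(xs, ys, lam=0.0):
    """Return the w that minimizes sum((y - w*x)**2) + lam * w**2.

    Setting the derivative to zero gives w = sum(x*y) / (sum(x*x) + lam).
    lam is a hypothetical regularization strength; lam=0 means no penalty.
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


def penalized_loss(w, xs, ys, lam=0.0):
    """Squared-error data loss plus the L2 penalty lam * w**2."""
    data_loss = sum((y - w * x) ** 2 for x, y in zip(xs, ys))
    return data_loss + lam * w ** 2


# Toy training data (made up for illustration only).
xs = [1.0, 2.0, 3.0]
ys = [2.1, 3.9, 6.2]

w_plain = fit_weight(xs, ys)         # no regularization
w_reg = fit_weight(xs, ys, lam=1.0)  # L2-regularized

print("unregularized weight:", w_plain)
print("regularized weight:  ", w_reg)
print("training loss (plain):", penalized_loss(w_plain, xs, ys))
print("training loss (reg):  ", penalized_loss(w_reg, xs, ys))
```

The regularized weight is smaller in magnitude, and the training loss rises only slightly. Whether that trade actually improves generalization depends on the data and on the value chosen for `lam`, which is normally tuned on a validation set.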
