
Deep Learning Course - Level: Beginner

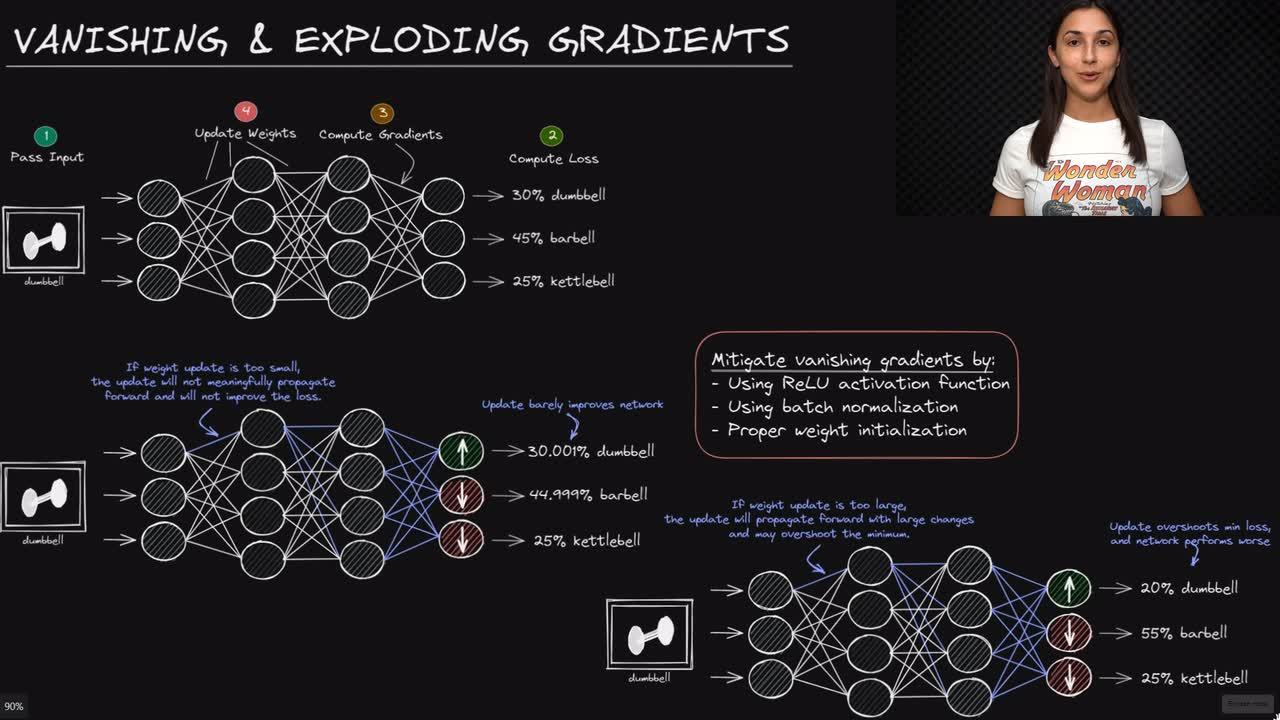

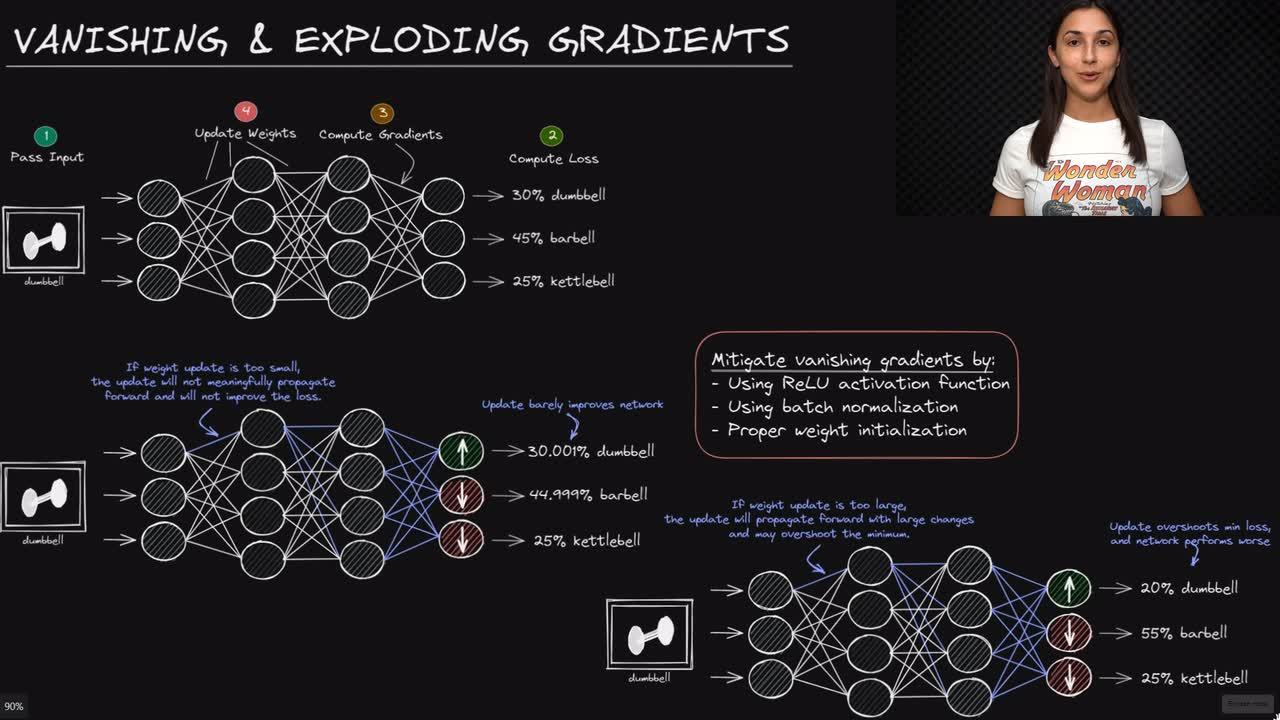

The vanishing gradient problem is an issue involving unstable gradients that can arise when training a neural network: the gradients for the earlier layers shrink toward zero. It is a consequence of the backpropagation algorithm used to calculate the gradients.
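To see why backpropagation can shrink gradients, consider a simplified network, assumed here for illustration only: a chain of $n$ layers with one sigmoid neuron each, where $z_l = w_l a_{l-1} + b_l$, $a_l = \sigma(z_l)$, $a_0$ is the input, and $L$ is the loss. Applying the chain rule to the weight of the first layer gives a product of one factor per later layer:

$$
\frac{\partial L}{\partial w_1}
= \frac{\partial L}{\partial a_n}
\left( \prod_{l=2}^{n} \sigma'(z_l)\, w_l \right)
\sigma'(z_1)\, a_0,
\qquad
\sigma'(z) = \sigma(z)\bigl(1 - \sigma(z)\bigr) \le \tfrac{1}{4}.
$$

If every $|w_l| \le 1$, then $\left|\frac{\partial L}{\partial w_1}\right| \le \left|\frac{\partial L}{\partial a_n}\right| \left(\tfrac{1}{4}\right)^{n} |a_0|$, so the gradient reaching the first layer can shrink geometrically as the network gets deeper.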

During training, the gradient descent optimizer calculates the gradient of the loss with respect to each of the weights and biases in the network. This calculation is performed via backpropagation.
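The following is a minimal sketch of backpropagation, assuming the same toy setup as above (a chain of single-neuron sigmoid layers) and a squared-error loss $L = \tfrac{1}{2}(a_n - y)^2$. The function names `forward` and `backprop` are hypothetical and not taken from any library. Printing the per-layer gradients shows them getting smaller toward the first layer.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def forward(weights, biases, x):
    """Forward pass through a chain of single-neuron sigmoid layers.

    Returns the pre-activations z_l and the activations [a_0, a_1, ..., a_n],
    where a_0 is the input x.
    """
    pre_acts, activations = [], [x]
    a = x
    for w, b in zip(weights, biases):
        z = w * a + b
        a = sigmoid(z)
        pre_acts.append(z)
        activations.append(a)
    return pre_acts, activations


def backprop(weights, biases, x, y):
    """Gradient of L = 0.5 * (a_n - y)**2 with respect to each weight (chain rule)."""
    pre_acts, activations = forward(weights, biases, x)
    grads = [0.0] * len(weights)
    delta = activations[-1] - y                # dL/da_n for squared error
    for l in reversed(range(len(weights))):
        s = sigmoid(pre_acts[l])
        delta *= s * (1.0 - s)                 # dL/dz_l = dL/da_l * sigma'(z_l)
        grads[l] = delta * activations[l]      # dL/dw_l = dL/dz_l * a_{l-1}
        delta *= weights[l]                    # dL/da_{l-1} = dL/dz_l * w_l
    return grads


# Usage: ten layers, all weights 1.0 and biases 0.0 (arbitrary illustrative values).
n_layers = 10
weights = [1.0] * n_layers
biases = [0.0] * n_layers
grads = backprop(weights, biases, x=1.0, y=0.0)
for layer, g in enumerate(grads, start=1):
    print(f"layer {layer:2d}: dL/dw = {g:.3e}")
```

Each step backward multiplies `delta` by another $\sigma'(z_l) \le 1/4$, which is why the gradient for layer 1 comes out several orders of magnitude smaller than the gradient for the last layer in this example.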
