TensorFlow.js - Introducing deep learning with client-side neural networks


Client-side artificial neural networks

In this post, we're going to introduce the concept of client-side artificial neural networks, which will lead us to deploying and running models, along with our full deep learning applications, in the browser!

To implement this cool capability, we'll be using TensorFlow.js, TensorFlow's JavaScript library, which will allow us to, well, build and access models in JavaScript.

I'm super excited to cover this, so let's get to it.

What makes TensorFlow.js different?

So, client-side neural networks?! Running models in the browser?! To appreciate the coolness factor of this, we need some context so that we can contrast what we've historically been able to do from a deployment perspective with what we can do now with client-side neural networks.

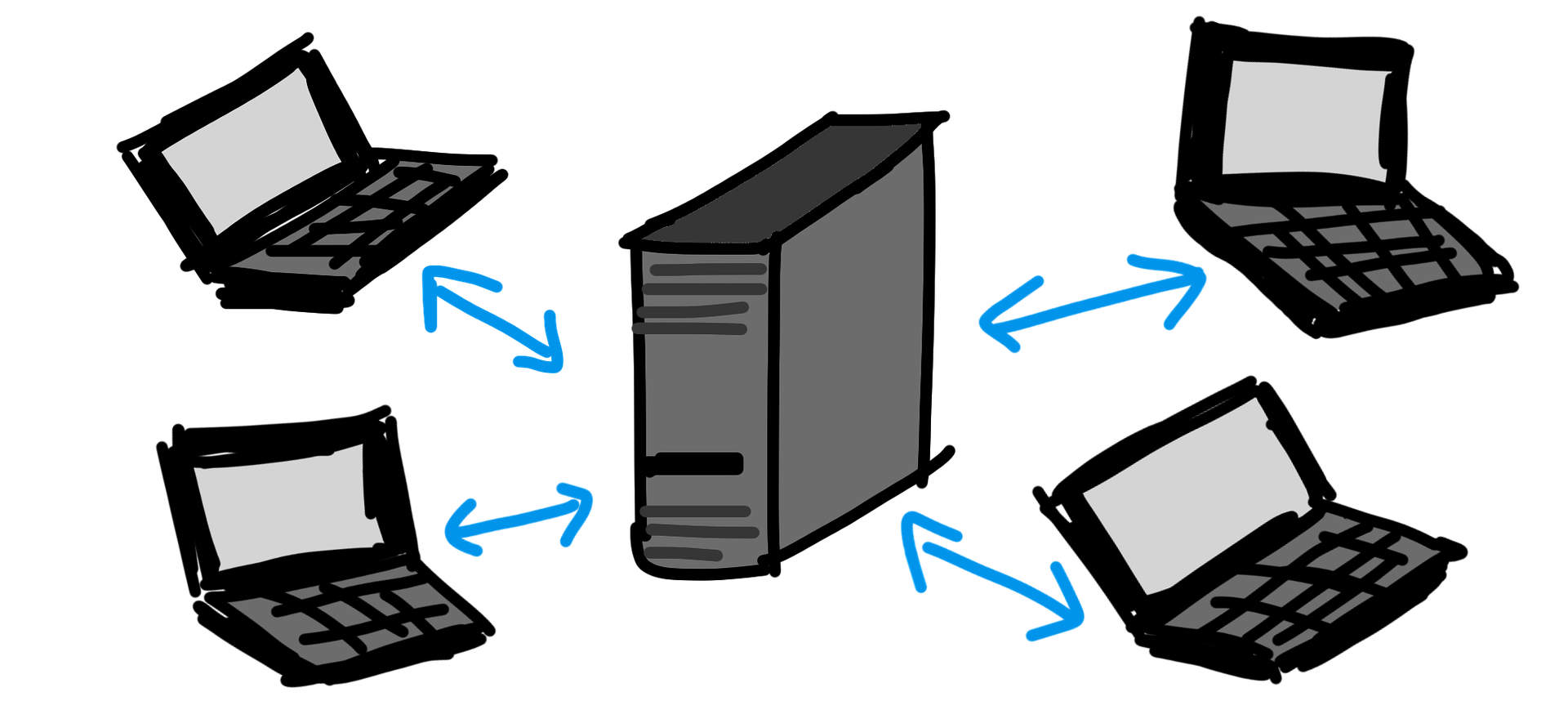

Client-Server Architecture

As we've seen in our previous series on deploying neural networks, in order to deploy a deep learning application, we need to bring two things together: the model and the data.

To make this happen, we'll normally see this: We have a front-end application, say a web app running in the browser, that a user can interact with to supply data. Then, we have a back-end application, a web service, where our model is loaded and running.

When the user supplies the data to the front-end application, that web app will make a call to the back-end application and post the data to the model.

The model will then do its thing, like make a prediction, and return that prediction to the web application, which will then show it to the user.
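To make that round trip concrete, here's a minimal sketch of what the front-end's call to the back-end might look like. The `/predict` endpoint, the JSON payload shape, and the `requestPrediction` name are all hypothetical and aren't taken from the earlier series. The optional `fetchImpl` parameter is only there so the function can be exercised without a real server.

```javascript
// Sketch only: the endpoint and the JSON shape are hypothetical.
async function requestPrediction(endpoint, userData, fetchImpl = fetch) {
  // Send the user's data across the network to the back-end web service.
  const response = await fetchImpl(endpoint, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify(userData),
  });

  // Surface HTTP errors instead of trying to parse a failed response.
  if (!response.ok) {
    throw new Error(`Prediction request failed with status ${response.status}`);
  }

  // The back-end runs the model and replies with its prediction as JSON.
  return response.json();
}
```

Calling something like `requestPrediction('/predict', { pixels: [...] })` from the web app would post the data to the server and resolve with whatever prediction the back-end sends back. Notice that every single prediction costs a network round trip.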

That's the usual story of how we bring the model and the data together, and we've already gone over all the details for how to actually implement this type of deployment.

Be sure to check out that series I mentioned earlier if you haven't already.

No network calls to get a prediction (client-side)

Now, with client-side neural network deployment, we have no back-end.

The model isn't sitting on a server somewhere waiting to be called by front-end apps, but rather, the model is embedded inside of the front-end application. What does that mean? Well, for a web app, that means the model is running right inside of the browser.

In the example scenario we went over a couple of moments ago, the data was sent across the network, or across the internet, from the front-end application to the model running in the back-end. Here, the data and the model are together from the start within the browser.
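As a rough sketch of what that could look like in code, the function below loads a model into the page once and then runs each prediction locally, with no network call per prediction. It assumes `tf` is the TensorFlow.js namespace (for example, the global created when the library is loaded with a script tag), that the model is a layers model with a single flat numeric input and a single output, and that the model URL is a placeholder. The `createLocalPredictor` name is made up for illustration.

```javascript
// Sketch only: `tf` is assumed to be the TensorFlow.js namespace, and the
// model URL and input shape are placeholders.
async function createLocalPredictor(tf, modelUrl) {
  // Download the model once; after this it lives inside the browser.
  const model = await tf.loadLayersModel(modelUrl);

  // Each call to the returned function runs entirely on the user's device.
  return async function predict(features) {
    // Wrap the user's data in a batch containing one example.
    const input = tf.tensor2d([features], [1, features.length]);
    const output = model.predict(input);

    // Copy the scores out of the tensor, then free the tensor memory.
    const scores = Array.from(await output.data());
    input.dispose();
    output.dispose();
    return scores;
  };
}
```

A page might call `const predict = await createLocalPredictor(tf, 'model.json')` when it loads and then call `predict(...)` each time the user supplies data. The user's data never leaves the device.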

Why move to the client-side?

Ok, so this is cool and everything, but what's the point? There are a few benefits:

- Keep user data local

- No prerequisites or installs

- Interactive and easy to use

Let's look at each of these in more detail.

Keep user data local

For one, users get to keep their data local to their machines or devices, since the model is running on the local device as well. If user data doesn't have to travel across the internet, that's a plus, and there's more on this concern in the previous series I mentioned.

No prerequisites or installs

Additionally, when we develop something that runs in a front-end application, like in the browser, anyone can use it. Anyone can interact with it as long as they can browse to the link our application is running on. There are no prerequisites or installs needed.

Interactive and easy to use

In general, a browser application is highly interactive and easy to use, so from a user perspective, it's really simple to get started and engage with the app.

The caveats

This is all great stuff, but just as there are considerations to address with the traditional deployment implementation, there are a couple of caveats for the client-side deployment implementation as well. Don't let that run you off. There are just a few things to consider.

As we're talking about these, I'm going to play some demos of open source projects (see video) that have been created with TensorFlow.js just so you can get an idea of some of the cool things we can do with client-side neural networks. Links to all of them are in the description.

For one, we have to consider the size of our models. Since our models will be loaded and running in the browser, you can imagine that loading a massive model into our web application might cause some issues.

30 MB in size or less

TensorFlow.js suggests using models that are 30 MB in size or less. To give some perspective, the VGG16 model is over 500 MB! So, what would happen if we tried to run that sucker in the browser? We're actually going to demo that in a future video, so stay tuned.

Alright, then what types of models are good to run in the browser? Well, smaller ones. Ones that have been created with the intention of running on smaller or lower-powered devices, like phones.

What types of models have we seen that are incredibly powerful for this? MobileNets! So we'll also be seeing how a MobileNet model holds up to being deployed in the browser as well.
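To get a feel for why VGG16 is a problem and a MobileNet isn't, here's a back-of-the-envelope size estimate. It assumes every weight is stored as a 32-bit float (4 bytes) and ignores the model topology file and any compression, so treat the result as a rough estimate only. The parameter counts are approximate figures I'm assuming for illustration (roughly 138 million for VGG16 and roughly 4.2 million for the original MobileNet), and the function names are made up.

```javascript
// Sketch only: assumes 4-byte float32 weights and ignores everything
// except the weights themselves.
const BYTES_PER_FLOAT32 = 4;
const BYTES_PER_MB = 1024 * 1024;

// Approximate parameter counts, used here as assumptions for illustration.
const APPROX_VGG16_PARAMS = 138e6;
const APPROX_MOBILENET_PARAMS = 4.2e6;

// Estimate how much space the weights alone would take up.
function estimateModelSizeMB(parameterCount) {
  return (parameterCount * BYTES_PER_FLOAT32) / BYTES_PER_MB;
}

// Compare the estimate against a size budget (30 MB by default).
function fitsBrowserBudget(parameterCount, budgetMB = 30) {
  return estimateModelSizeMB(parameterCount) <= budgetMB;
}

console.log('VGG16 fits:', fitsBrowserBudget(APPROX_VGG16_PARAMS));
console.log('MobileNet fits:', fitsBrowserBudget(APPROX_MOBILENET_PARAMS));
```

Under these assumptions, VGG16's weights come out well over the 500 MB mark, while the MobileNet's land comfortably under the 30 MB suggestion.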

Neural network capabilities in the browser

Once we have a model up and running in the browser, can we do everything we could ordinarily do with a model?

Using TensorFlow.js, pretty much. But would we want to do everything with a model running in the browser? That's another question, and the answer is probably not.

Having our models run in the browser is best suited for tasks like fine-tuning pre-trained models or, most popularly, inference: using a model to get predictions on new data. This task is exactly what we saw with the project we worked on in the Keras model deployment series using Flask.

While building new models and training models from scratch can also be done in the browser, these tasks are usually better addressed using other APIs, like Keras or standard TensorFlow in Python.

Now that we have an idea of what it means to deploy our model to a client-side application, why we'd want to do this, and what types of specific things we'd likely use this for, let's get set to start coding and implementing applications for this in the next posts of this series. I'll see ya in the next one!
