Semi-supervised Learning explained

Semi-supervised learning for machine learning

In this post, we'll be discussing the concept of semi-supervised learning. Let's get right to it!

Introducing semi-supervised learning

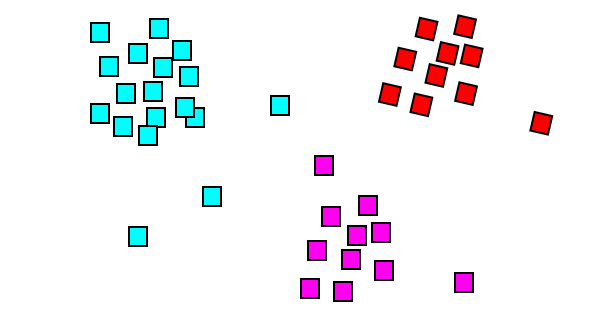

Semi-supervised learning takes a middle ground between supervised learning and unsupervised learning.

As a quick refresher, recall from previous posts that supervised learning is the learning that occurs during training of an artificial neural network when the data in our training set is labeled. Unsupervised learning, on the other hand, is the learning that occurs when the data in our training set is not labeled. Now, onto semi-supervised learning.

Semi-supervised learning uses a combination of supervised and unsupervised learning techniques. That's because, in a scenario where we'd make use of semi-supervised learning, our data is a mix of labeled and unlabeled samples.

Let's expand on this idea with an example.

Large unlabeled dataset

Suppose we have access to a large unlabeled dataset that we'd like to train a model on and that manually labeling all of this data ourselves is just not practical.

Well, we could go through and manually label some portion of this large data set ourselves and use that portion to train our model.

This is fine. In fact, this is how a lot of data used for neural networks becomes labeled. However, if we have access to large amounts of data, and we've only labeled some small portion of this data, then what a waste it would be to just leave all the other unlabeled data on the table.

After all, the more data we have to train a model, the better and more robust our model will generally be. What can we do to make use of the remaining unlabeled data in our data set?

Well, one thing we can do is implement a technique that falls under the category of semi-supervised learning called pseudo-labeling.

Pseudo-labeling

This is how pseudo-labeling works. As just mentioned, we've already labeled some portion of our data set. Now, we're going to use this labeled data as the training set for our model. We're then going to train our model, just as we would with any other labeled data set.

Through the regular training process, we get our model performing reasonably well on the labeled data. Everything we've done up to this point has been regular old supervised learning.

Now here's where the unsupervised learning piece comes into play. After we've trained our model on the labeled portion of the data set, we then use our model to predict on the remaining unlabeled portion of data, and we then take these predictions and label each piece of unlabeled data with the individual outputs that were predicted for them.

This process of labeling the unlabeled data with the output that was predicted by our neural network is the very essence of pseudo-labeling.

After labeling the unlabeled data through this pseudo-labeling process, we train our model on the full data set, which is now composed of both the data that was truly labeled and the data that was pseudo-labeled.
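The steps described above can be sketched in a few lines of Python. This is only a minimal illustration under some loud assumptions: the `CentroidClassifier` class is a hypothetical, tiny stand-in for the neural network (it just predicts the class whose average point is closest), the two-cluster toy data is made up for the example, and the function name `pseudo_label_and_retrain` is ours. With a real network, the same three steps would wrap whatever training and prediction calls your framework provides.

```python
import random


class CentroidClassifier:
    """Hypothetical stand-in for the neural network.

    It predicts the class whose mean training point is nearest.
    """

    def fit(self, samples, labels):
        sums, counts = {}, {}
        for point, label in zip(samples, labels):
            acc = sums.setdefault(label, [0.0] * len(point))
            for i, value in enumerate(point):
                acc[i] += value
            counts[label] = counts.get(label, 0) + 1
        # One centroid (mean point) per class.
        self.centroids = {
            label: [total / counts[label] for total in acc]
            for label, acc in sums.items()
        }
        return self

    def predict(self, samples):
        def sq_dist(a, b):
            return sum((x - y) ** 2 for x, y in zip(a, b))

        return [
            min(self.centroids, key=lambda label: sq_dist(point, self.centroids[label]))
            for point in samples
        ]


def pseudo_label_and_retrain(model, labeled_x, labeled_y, unlabeled_x):
    # Step 1: regular supervised training on the hand-labeled portion.
    model.fit(labeled_x, labeled_y)
    # Step 2: predict on the unlabeled portion; the predictions become pseudo-labels.
    pseudo_y = model.predict(unlabeled_x)
    # Step 3: retrain on the truly labeled and pseudo-labeled data together.
    model.fit(labeled_x + unlabeled_x, labeled_y + pseudo_y)
    return model, pseudo_y


# Made-up toy data: two well-separated clusters of 2D points.
rng = random.Random(0)


def make_points(center, n):
    return [[center[0] + rng.gauss(0, 0.5), center[1] + rng.gauss(0, 0.5)] for _ in range(n)]


labeled_x = make_points((0, 0), 5) + make_points((5, 5), 5)      # small labeled portion
labeled_y = [0] * 5 + [1] * 5
unlabeled_x = make_points((0, 0), 50) + make_points((5, 5), 50)  # large unlabeled portion

model, pseudo_y = pseudo_label_and_retrain(
    CentroidClassifier(), labeled_x, labeled_y, unlabeled_x
)
```

Here only 10 samples were labeled by hand, yet the final model is fit on all 110 samples. Note that the pseudo-labels are only as good as the predictions of the first model, so this sketch assumes that model is already reasonably accurate.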

Pseudo-labeling also lets us train on data that would otherwise have taken many tedious hours of human labor to label manually.

As we can imagine, sometimes the cost of acquiring or generating a fully labeled data set is just too high, or the pure act of generating all the labels itself is just not feasible.

Through this process, we can see how this approach makes use of both supervised learning, with the labeled data, and unsupervised learning, with the unlabeled data, which together give us the practice of semi-supervised learning.

Wrapping up

We should now have an understanding of what semi-supervised learning is, and how we can apply it in practice through the use of pseudo-labeling. I'll see you in the next one!
