Creating PyTorch Tensors for Deep Learning - Best Options


Welcome back to this series on neural network programming with PyTorch. In this post, we will look closely at the differences between the primary ways of transforming data into PyTorch tensors.

By the end of this post, we'll know the differences between the primary options as well as which options should be used and when. Without further ado, let's get started.

PyTorch tensors, as we have seen, are instances of the torch.Tensor PyTorch class. The difference between the abstract concept of a tensor and a PyTorch tensor is that PyTorch tensors give us a concrete implementation that we can work with in code.

In the last post, we saw how to create tensors in PyTorch using data like Python lists, sequences and NumPy ndarrays. Given a numpy.ndarray, we found that there are four ways to create a torch.Tensor object.

Here is a quick recap:

> data = np.array([1,2,3])

> type(data)

numpy.ndarray

> o1 = torch.Tensor(data)

> o2 = torch.tensor(data)

> o3 = torch.as_tensor(data)

> o4 = torch.from_numpy(data)

> print(o1)

tensor([1., 2., 3.])

> print(o2)

tensor([1, 2, 3], dtype=torch.int32)

> print(o3)

tensor([1, 2, 3], dtype=torch.int32)

> print(o4)

tensor([1, 2, 3], dtype=torch.int32)

Our task in this post is to explore the difference between these options and to suggest a best option for our tensor creation needs.

NumPy dtype Behavior on Different Systems

Depending on your machine and operating system, it is possible that your dtype may be different from what is shown here and in the video.

NumPy sets its default integer dtype based on whether it's running on a 32-bit or 64-bit system, and the behavior also differs on Windows systems.

This link provides further information regarding the difference seen on Windows systems. The affected methods are: tensor, as_tensor, and from_numpy.

Thank you to David from the hivemind for figuring this out!
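One way to sidestep the platform difference is to set the NumPy dtype explicitly instead of relying on the default. Here is a minimal sketch, assuming int64 is the dtype we want (that choice is ours for illustration and isn't required by anything above):

```python
import numpy as np
import torch

# Pin the dtype rather than relying on the platform's default integer type.
data = np.array([1, 2, 3], dtype=np.int64)

# The three dtype-inferring calls now see int64 on any operating system.
t2 = torch.tensor(data)
t3 = torch.as_tensor(data)
t4 = torch.from_numpy(data)

print(t2.dtype, t3.dtype, t4.dtype)
```

With the dtype pinned, the printed tensors should look the same from one machine to the next.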

Tensor creation operations: What's the difference?

Let's get started and figure out what these differences are all about.

Uppercase/lowercase: torch.Tensor() vs torch.tensor()

Notice how the first option torch.Tensor() has an uppercase T while the second option torch.tensor() has a lowercase t. What's up with this difference?

The first option with the uppercase T is the constructor of the torch.Tensor class, and the second option is what we call a factory function that constructs torch.Tensor objects and returns them to the caller.

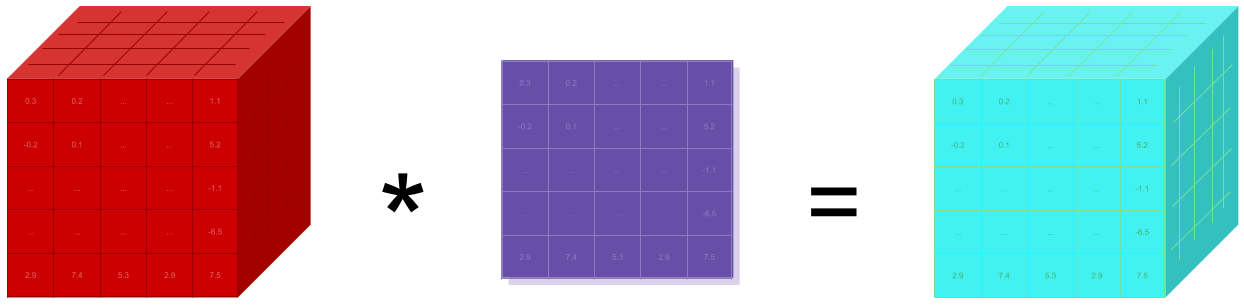

You can think of the torch.tensor() function as a factory that builds tensors given some parameter inputs. Factory functions are a software design pattern for creating objects. If you want to read more about it, check here.

Okay. That's the difference between the uppercase T and the lowercase t, but which way is better between these two? The answer is that it's fine to use either one. However, the factory function torch.tensor() has better documentation and more configuration options, so it gets the winning spot at the moment.
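To make the "more configuration options" point concrete, here is a small sketch of the factory function taking keyword options alongside the data. The particular values chosen (float64, the CPU device, and gradient tracking) are just for illustration:

```python
import torch

# The factory function accepts configuration options as keyword arguments.
t = torch.tensor(
    [1, 2, 3],
    dtype=torch.float64,   # explicit element type
    device="cpu",          # where the tensor lives
    requires_grad=True,    # track gradients for this tensor
)

print(t.dtype)          # torch.float64
print(t.requires_grad)  # True
```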

Default dtype vs inferred dtype

Alright, before we knock the torch.Tensor() constructor off our list in terms of use, let's go over the difference we observed in the printed tensor outputs.

The difference is in the dtype of each tensor. Let's have a look:

> print(o1.dtype)

torch.float32

> print(o2.dtype)

torch.int32

> print(o3.dtype)

torch.int32

> print(o4.dtype)

torch.int32

The difference here arises from the fact that the torch.Tensor() constructor uses the default dtype when building the tensor. We can check the default dtype using the torch.get_default_dtype() function:

> torch.get_default_dtype()

torch.float32

To verify with code, we can do this:

> o1.dtype == torch.get_default_dtype()

True

The other calls choose a dtype based on the incoming data. This is called type inference. The dtype is inferred based on the incoming data. Note that the dtype can also be explicitly set for these calls by specifying the dtype as an argument:

> torch.tensor(data, dtype=torch.float32)

> torch.as_tensor(data, dtype=torch.float32)

With torch.Tensor(), we are unable to pass a dtype to the constructor. This is an example of the torch.Tensor() constructor lacking in configuration options. This is one of the reasons to go with the torch.tensor() factory function for creating our tensors.
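The default-versus-inferred behavior is easy to see with floating point input, where the result doesn't depend on the platform's integer size. This sketch assumes the default dtype has not been changed from torch.float32, and the variable names are ours:

```python
import numpy as np
import torch

# NumPy stores Python floats as float64 by default.
data = np.array([1.0, 2.0, 3.0])

o1 = torch.Tensor(data)                            # constructor: default dtype
o2 = torch.tensor(data)                            # factory: dtype inferred from data
o2_cast = torch.tensor(data, dtype=torch.float32)  # factory: dtype set explicitly

print(o1.dtype == torch.get_default_dtype())
print(o2.dtype)
print(o2_cast.dtype)
```

Here the constructor converts the float64 input to the default dtype, while the factory function keeps float64 unless told otherwise.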

Let's look at the last hidden difference between these alternative creation methods.

Sharing memory for performance: copy vs share

The third difference is lurking behind the scenes or underneath the hood. To reveal the difference, we need to make a change to the original input data in the numpy.ndarray after using the ndarray to create our tensors.

Let's do this and see what we get:

> print('old:', data)

old: [1 2 3]

> data[0] = 0

> print('new:', data)

new: [0 2 3]

> print(o1)

tensor([1., 2., 3.])

> print(o2)

tensor([1, 2, 3], dtype=torch.int32)

> print(o3)

tensor([0, 2, 3], dtype=torch.int32)

> print(o4)

tensor([0, 2, 3], dtype=torch.int32)

Note that originally, we had data[0]=1, and also note that we only changed the data in the original numpy.ndarray. Notice we didn't explicitly make any changes to our tensors (o1, o2, o3, o4).

However, after setting data[0]=0, we can see that some of our tensors have changed. The first two, o1 and o2, still have the original value of 1 at index 0, while the last two, o3 and o4, have the new value of 0 at index 0.

This happens because torch.Tensor() and torch.tensor() copy their input data while torch.as_tensor() and torch.from_numpy() share their input data in memory with the original input object.

| Share Data | Copy Data |
|---|---|
| torch.as_tensor() | torch.tensor() |
| torch.from_numpy() | torch.Tensor() |

This sharing just means that the actual data in memory exists in a single place. As a result, any changes that occur in the underlying data will be reflected in both objects, the torch.Tensor and the numpy.ndarray.

Sharing data is more efficient and uses less memory than copying data because the data is not written to two locations in memory.
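Besides mutating the array and watching which tensors change, we can also ask NumPy directly whether two objects overlap in memory. This is a minimal sketch using np.shares_memory(). The variable names are ours, and the explicit int64 dtype is only there to keep the result platform-independent:

```python
import numpy as np
import torch

data = np.array([1, 2, 3], dtype=np.int64)

copied = torch.tensor(data)     # copies the input data
shared = torch.as_tensor(data)  # shares memory with the input data

# Tensor.numpy() returns an array view over the tensor's own memory,
# so comparing it with `data` tells us whether the tensor shares with `data`.
copy_overlaps = np.shares_memory(data, copied.numpy())
share_overlaps = np.shares_memory(data, shared.numpy())

print(copy_overlaps)   # False
print(share_overlaps)  # True
```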

If we have a torch.Tensor and we want to convert it to a numpy.ndarray, we do it like so:

> print(o3.numpy())

[0 2 3]

> print(o4.numpy())

[0 2 3]

We can confirm the type of each result:

> print(type(o3.numpy()))

<class 'numpy.ndarray'>

> print(type(o4.numpy()))

<class 'numpy.ndarray'>

This establishes that torch.as_tensor() and torch.from_numpy() both share memory with their input data. However, which one should we use, and how are they different?

The torch.from_numpy() function only accepts numpy.ndarrays, while the torch.as_tensor() function accepts a wide variety of array-like objects including other PyTorch tensors. For this reason, torch.as_tensor() is the winning choice in the memory sharing game.
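The difference in accepted inputs can be sketched as follows. The variable names and the from_numpy_accepts_list flag are ours, introduced only for illustration:

```python
import numpy as np
import torch

arr = np.array([1, 2, 3], dtype=np.int64)
existing = torch.tensor([4, 5, 6])

# as_tensor() takes ndarrays, existing tensors, and plain Python sequences.
a = torch.as_tensor(arr)
b = torch.as_tensor(existing)
c = torch.as_tensor([7, 8, 9])

# from_numpy() rejects anything that is not a numpy.ndarray.
try:
    torch.from_numpy([7, 8, 9])
    from_numpy_accepts_list = True
except TypeError:
    from_numpy_accepts_list = False

print(from_numpy_accepts_list)                # False
print(b.data_ptr() == existing.data_ptr())    # True: no copy of the existing tensor
```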

Best options for creating tensors in PyTorch

Given all of these details, these two are the best options:

- torch.tensor()
- torch.as_tensor()

The torch.tensor() call is the go-to call, while torch.as_tensor() should be employed when tuning our code for performance.

Some things to keep in mind about memory sharing (it works where it can):

- Since numpy.ndarray objects are allocated on the CPU, the as_tensor() function must copy the data from the CPU to the GPU when a GPU is being used.
- The memory sharing of as_tensor() doesn't work with built-in Python data structures like lists.
- The as_tensor() call requires developer knowledge of the sharing feature. This is necessary so we don't inadvertently make an unwanted change in the underlying data without realizing the change impacts multiple objects.
- The as_tensor() performance improvement will be greater if there are a lot of back and forth operations between numpy.ndarray objects and tensor objects. However, if there is just a single load operation, there shouldn't be much impact from a performance perspective.
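The "works where it can" caveat can be sketched with two cases where as_tensor() ends up copying. The list case comes from the points above. The dtype-conversion case is an additional observation of ours: converting to a different dtype needs new storage, so sharing isn't possible there either. Variable names are ours:

```python
import numpy as np
import torch

# Case 1: a Python list has no buffer to share, so the data is copied.
values = [1, 2, 3]
from_list = torch.as_tensor(values)
values[0] = 0
print(from_list)  # still holds the original values

# Case 2: same dtype shares memory, a different dtype forces a copy.
arr = np.array([1, 2, 3], dtype=np.int64)
same_dtype = torch.as_tensor(arr)
converted = torch.as_tensor(arr, dtype=torch.float32)
arr[0] = 0
print(same_dtype)  # reflects the change to arr
print(converted)   # unaffected by the change to arr
```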

Wrapping up

At this point, we should now have a better understanding of the PyTorch tensor creation options. We've learned about factory functions and we've seen how memory sharing vs copying can impact performance and program behavior. I'll see you in the next one!
