Rank, Axes, and Shape Explained - Tensors for Deep Learning


Welcome back to this series on neural network programming with PyTorch. In this post, we will dig deeper into tensors and introduce three fundamental tensor attributes: rank, axes, and shape. Without further ado, let's get started.

The concepts of rank, axes, and shape are the tensor attributes that will concern us most in deep learning.

- Rank

- Axes

- Shape

These three concepts build on one another, starting with rank, then axes, and building up to shape, so keep an eye out for the relationship between them.

Rank, axes, and shape are all fundamentally connected to the concept of indexes that we discussed in the previous post. If you haven't seen that one, I highly recommend checking it out. Let's kick things off at the ground floor by introducing the rank of a tensor.

Rank of a tensor

The rank of a tensor refers to the number of dimensions present within the tensor. Suppose we are told that we have a rank-2 tensor. This means all of the following:

- We have a matrix

- We have a 2d-array

- We have a 2d-tensor

We are introducing the word rank here because it is commonly used in deep learning when referring to the number of dimensions present within a given tensor. This is just another one of the instances where different areas of study use different words to refer to the same concept. Don't let it throw you off!

Rank and indexes

The rank of a tensor tells us how many indexes are required to access (refer to) a specific data element contained within the tensor data structure.
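As a rough illustration of this idea, the sketch below uses plain nested Python lists as stand-ins for tensors. The helper name `rank_of` is hypothetical (it is not a PyTorch function), and it assumes every nested level is non-empty and regular.

```python
def rank_of(data):
    """Count the nesting depth of a regular nested list (hypothetical helper)."""
    rank = 0
    while isinstance(data, list):
        rank += 1
        data = data[0]  # assumes each level is non-empty and regular
    return rank


vector = [1, 2, 3]                 # rank 1: one index reaches a number
matrix = [[1, 2, 3], [4, 5, 6]]    # rank 2: two indexes reach a number

print(rank_of(vector), vector[2])      # 1 3
print(rank_of(matrix), matrix[1][2])   # 2 6
```

The number of bracketed indexes needed to reach a single number matches the rank in each case.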

Let's build on the concept of rank by looking at the axes of a tensor.

Axes of a tensor

If we have a tensor, and we want to refer to a specific dimension, we use the word axis in deep learning.

If we say that a tensor is a rank-2 tensor, we mean that the tensor has two dimensions, or equivalently, that the tensor has two axes.

Elements are said to exist or run along an axis. This running is constrained by the length of each axis. Let's look at the length of an axis now.

Length of an axis

The length of an axis tells us how many indexes are available along that axis.

Suppose we have a tensor called t, and we know that the first axis has a length of three while the second axis has a length of four.

Since the first axis has a length of three, this means that we can index three positions along the first axis like so:

t[0]

t[1]

t[2]

All of these indexes are valid, but we can't move past index 2.

Since the second axis has a length of four, we can index four positions along the second axis. This is possible for each index of the first axis, so we have:

t[0][0]

t[1][0]

t[2][0]

t[0][1]

t[1][1]

t[2][1]

t[0][2]

t[1][2]

t[2][2]

t[0][3]

t[1][3]

t[2][3]
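The twelve index pairs listed above can also be generated programmatically. This is a minimal sketch that assumes a nested Python list as a stand-in for `t`; the values stored in it are arbitrary and chosen only so each position is recognizable.

```python
from itertools import product

# Stand-in for t: 3 positions along the first axis, 4 along the second.
# The stored values are arbitrary (10 * i + j).
t = [[10 * i + j for j in range(4)] for i in range(3)]

first_axis_length = len(t)       # 3
second_axis_length = len(t[0])   # 4

# Every valid (i, j) pair: 3 * 4 = 12 combinations.
valid_indexes = list(product(range(first_axis_length), range(second_axis_length)))
print(len(valid_indexes))   # 12
print(t[2][3])              # the last valid position

# Moving past the end of an axis fails.
try:
    t[3]
except IndexError as err:
    print("IndexError:", err)
```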

Tensor axes example

Let's look at some examples to make this solid. We'll consider the same tensor dd as before:

> dd = [

[1,2,3],

[4,5,6],

[7,8,9]

]

Each element along the first axis is an array:

> dd[0]

[1, 2, 3]

> dd[1]

[4, 5, 6]

> dd[2]

[7, 8, 9]

Each element along the second axis is a number:

> dd[0][0]

1

> dd[1][0]

4

> dd[2][0]

7

> dd[0][1]

2

> dd[1][1]

5

> dd[2][1]

8

> dd[0][2]

3

> dd[1][2]

6

> dd[2][2]

9

Note that, with tensors, the elements of the last axis are always numbers. Every other axis will contain n-dimensional arrays. This is what we see in this example, but this idea generalizes.
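To see how this generalizes, here is a small sketch with a rank-3 nested list. The name `ddd` and its values are assumptions made up for illustration; they are not from the earlier examples.

```python
# A rank-3 nested list with shape 2 x 2 x 3 (values chosen arbitrarily).
ddd = [
    [[1, 2, 3], [4, 5, 6]],
    [[7, 8, 9], [10, 11, 12]],
]

a = ddd[1]        # one index: a 2d-array
b = ddd[1][0]     # two indexes: an array
c = ddd[1][0][2]  # three indexes (the last axis): a number

print(a)  # [[7, 8, 9], [10, 11, 12]]
print(b)  # [7, 8, 9]
print(c)  # 9
```

Only after indexing the last axis do we arrive at a number; every earlier axis hands back a smaller array.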

The rank of a tensor tells us how many axes a tensor has, and the length of these axes leads us to the very important concept known as the shape of a tensor.

Shape of a tensor

The shape of a tensor is determined by the length of each axis, so if we know the shape of a given tensor, then we know the length of each axis, and this tells us how many indexes are available along each axis.

Let's consider the same tensor dd as before:

> dd = [

[1,2,3],

[4,5,6],

[7,8,9]

]

To work with this tensor's shape, we'll create a torch.Tensor object like so:

> import torch

> t = torch.tensor(dd)

> t

tensor([

[1, 2, 3],

[4, 5, 6],

[7, 8, 9]

])

> type(t)

torch.Tensor

Now, we have a torch.Tensor object, and so we can ask to see the tensor's shape:

> t.shape

torch.Size([3, 3])

This tells us that the tensor's shape is 3 x 3. Note that, in PyTorch, the size and the shape of a tensor are the same thing.
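A quick way to check that size and shape agree is to compare the `size()` method with the `shape` attribute. This is a minimal sketch that rebuilds the same 3 x 3 tensor so it can run on its own.

```python
import torch

t = torch.tensor([[1, 2, 3], [4, 5, 6], [7, 8, 9]])

print(t.size())              # size() is a method call
print(t.shape)               # shape is an attribute
print(t.size() == t.shape)   # True
```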

The shape of 3 x 3 tells us that each axis of this rank-2 tensor has a length of 3, which means that we have three indexes available along each axis. Let's look now at why the shape of a tensor is so important.

A tensor's shape is important

The shape of a tensor is important for a few reasons. The first is that the shape allows us to think conceptually about, or even visualize, a tensor. Higher rank tensors become more abstract, and the shape gives us something concrete to think about.

The shape also encodes all of the relevant information about axes, rank, and therefore indexes.
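As a rough sketch of how the shape carries this information, the rank can be read off as the length of the shape, and each entry of the shape gives the number of valid indexes along one axis. The variable names here are illustrative only.

```python
import torch

t = torch.tensor([[1, 2, 3], [4, 5, 6], [7, 8, 9]])

rank = len(t.shape)    # the number of axes
print(rank, t.dim())   # 2 2

# Each entry in the shape is the length of one axis.
for axis, length in enumerate(t.shape):
    print(f"axis {axis}: valid indexes 0..{length - 1}")
```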

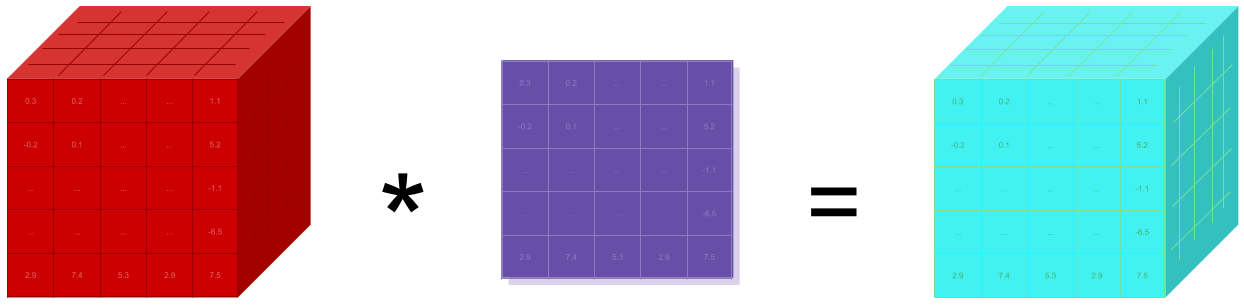

Additionally, one of the types of operations we must perform frequently when we are programming our neural networks is called reshaping.

As our tensors flow through our networks, certain shapes are expected at different points inside the network, and as neural network programmers, it is our job to understand the incoming shape and have the ability to reshape as needed.

Reshaping a tensor

Before we look at reshaping tensors, recall how we reshaped the list of terms we started with earlier:

Shape 6 x 1

- number

- scalar

- array

- vector

- 2d-array

- matrix

Shape 2 x 3

- number, array, 2d-array

- scalar, vector, matrix

Shape 3 x 2

- number, scalar

- array, vector

- 2d-array, matrix

Each of these groups of terms represents the same underlying data, only with a different shape. This is just a little example to motivate the idea of reshaping.

The important take-away from this motivation is that the shape changes the grouping of the terms but does not change the underlying terms themselves.

Let's look at our example tensor dd again:

> t = torch.tensor(dd)

> t

tensor([

[1, 2, 3],

[4, 5, 6],

[7, 8, 9]

])

This torch.Tensor is a rank 2 tensor with a shape of [3,3] or 3 x 3.

Now, suppose we need to reshape t to be of shape [1,9]. This would give us one array along the first axis and nine numbers along the second axis:

> t.reshape(1,9)

tensor([[1, 2, 3, 4, 5, 6, 7, 8, 9]])

> t.reshape(1,9).shape

torch.Size([1, 9])

Now, one thing to notice about reshaping is that the product of the component values in the shape must equal the total number of elements in the tensor.

For example:

- 3 * 3 = 9

- 1 * 9 = 9

This makes it so that there are enough positions inside the tensor data structure to contain all of the original data elements after the reshaping.
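The product rule can be checked directly. The sketch below tries a few candidate shapes (chosen here for illustration) whose components multiply to 9, and then one shape that does not, which PyTorch rejects with a `RuntimeError`.

```python
import torch

t = torch.tensor([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(t.numel())  # 9 elements in total

# Shapes whose components multiply to 9 are all valid.
for shape in [(1, 9), (9, 1), (3, 3), (9,)]:
    reshaped = t.reshape(shape)
    assert reshaped.numel() == t.numel()
    print(shape, "->", tuple(reshaped.shape))

# 2 * 5 = 10 does not match 9, so this reshape is rejected.
try:
    t.reshape(2, 5)
except RuntimeError as err:
    print("reshape(2, 5) failed:", err)
```

Reshaping never adds or drops elements, which is why the element count has to match exactly.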

This was just a light introduction to tensor reshaping. In a future post, we'll cover the concept in more detail.

Wrapping up

This gives us an introduction to tensors. We should now have a good understanding of tensors and the lingo used to describe them, like rank, axes, and shape. Soon, we'll see the various ways we can create tensors in PyTorch. I'll see you in the next one!
